SAFE

Safer Autonomous Farming Equipment

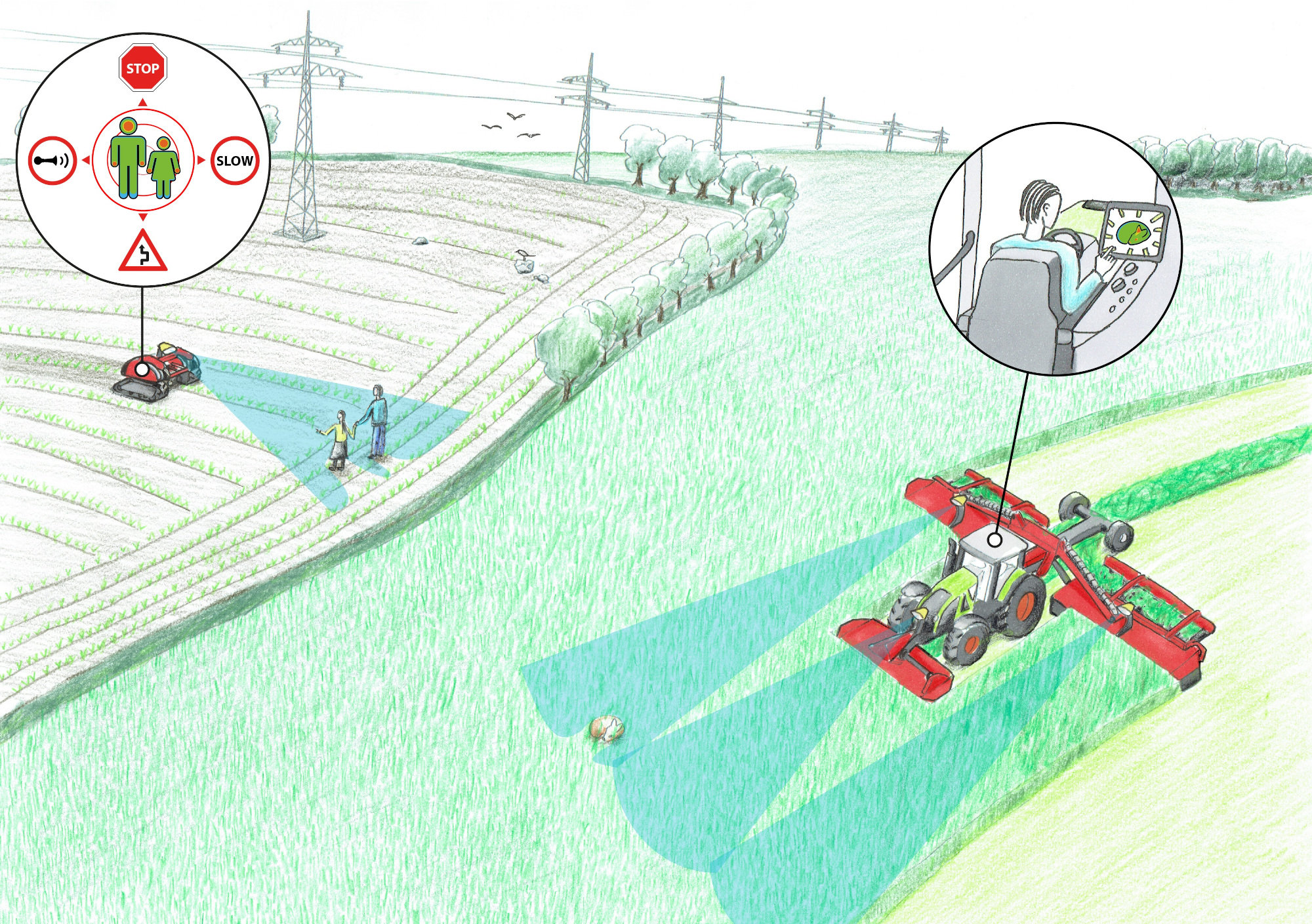

In their early carreers, Peter Christiansen and Mikkel Fly Kragh did their PhDs in safety for autonomous farming vehicles. The SAFE project was a joint research collaboration between two agricultural machine manufacturers, a robotic consulting firm, and two research institutions. The SAFE project sought to explore technologies for maximizing the safety of both humans and animals with autonomous farming vehicles, while minimizing the workload and supervision needed by farmers.

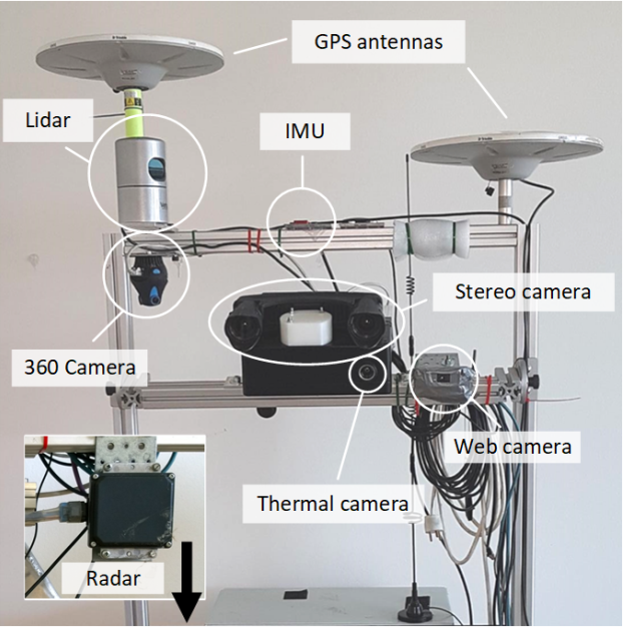

Peter’s and Mikkel’s role in the project was to explore the capabilities of a variety of sensor technologies, including cameras, lidar and radar, for reliable obstacle detection and avoidance in unstructured, agricultural environments. They designed and built an advanced perception system, synchronized and calibrated all sensors, and used the system for extensive data collection at seven different locations in Denmark. They published one of the datasets, FieldSAFE, which, to date, has been downloaded more than 10,000 times.

Detection algorithms

Several state-of-the-art detection algorithms were investigated. This included both traditional computer vision and deep learning for object detection (bounding box predictions), and fully convolutional neural networks for semantic segmentation (pixel-wise predictions).

Object detection

Semantic segmentation

_

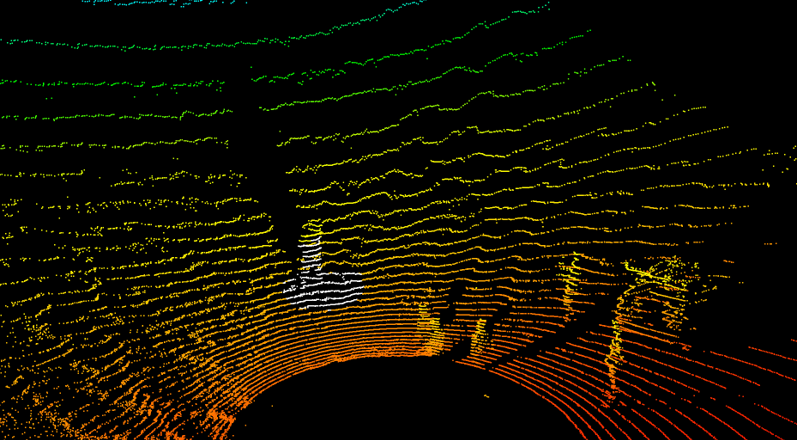

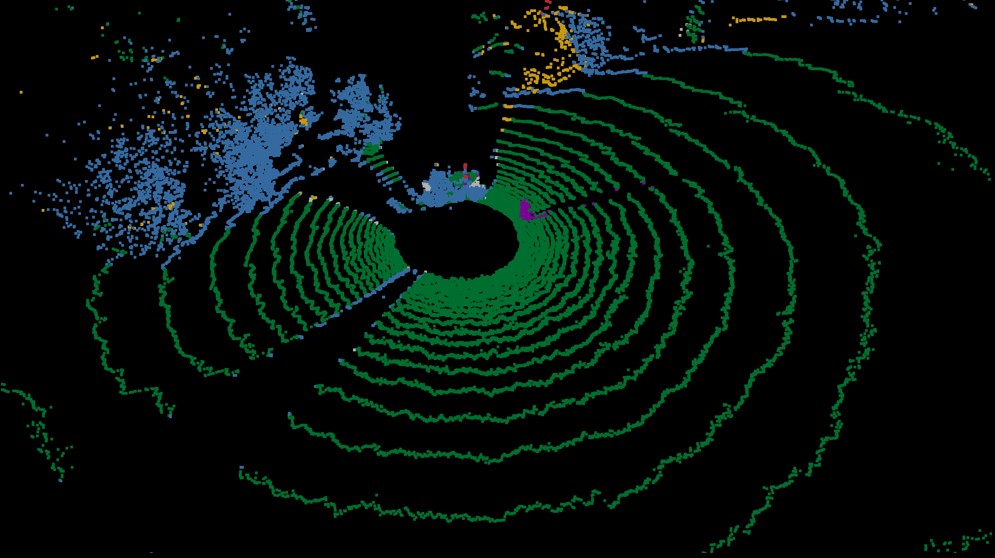

For 3D point clouds, either generated from stereo vision or directly available from a multi-beam lidar, we also compared traditional methods based on hand-crafted features with methods based on deep learning. Below, the traditional feature extraction is visualized on the left, illustrating all points in a neighborhood of a single 3D point. Based on features such as linearity, planarity, scatteredness, and height, each 3D point is classified using a support vector machine (SVM). On the right, predictions from a fully convolutional neural network operating on 2D range-images are shown. As of today, there is still debate on which representation of a 3D point cloud is best suited for deep learning. Some methods use multiple 2D views of a point cloud, some use a hierarchical voxel-based representation, some use graph neural networks, and some use transformer-based models.

Traditional feature extraction (neighborhood)

Deep learning (predictions)

Sensor fusion

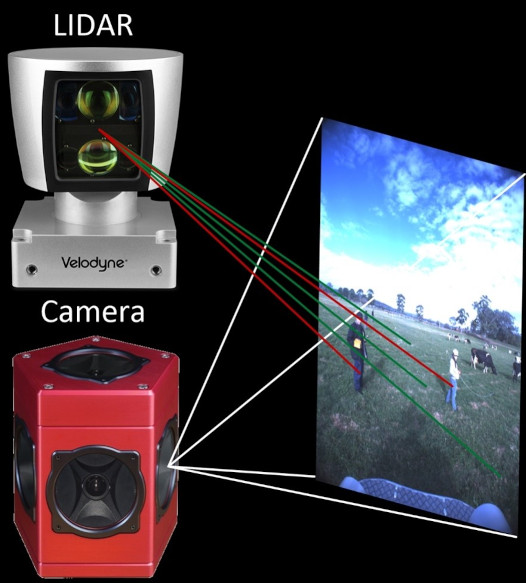

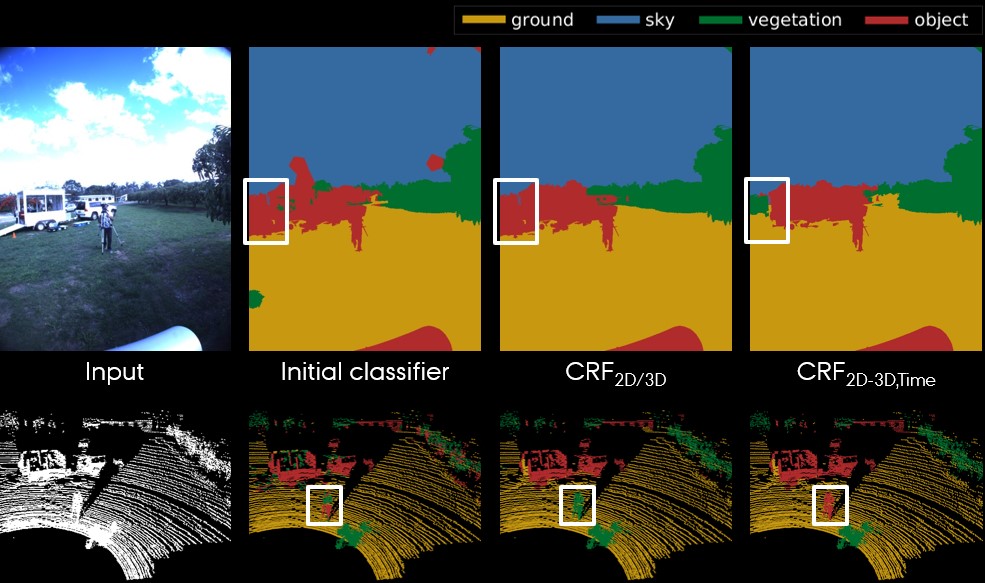

Sensor fusion or multi-modal fusion deals with the issue of combining sensor data from different domains in order to increase robustness and confidence. Combining multiple sensors should thus result in reduced uncertainty compared to individual sensor performances. Below, an example of lidar and camera fusion is shown. By utilizing the extrinsic calibration parameters between the sensors and the intrinsics of the camera, it is possible to project 3D points onto a corresponding 2D image. Using this approach, the image on the right shows pixel-wise and point-wise classification results using a conditional random field (CRF) for fusing. The white boxes indicate the qualitative performance improvements when fusing 2D and 3D information, and furthermore when fusing sensor data across subsequent frames (time).

Projection of 3D points

Sensor fusion results

Obstacle mapping

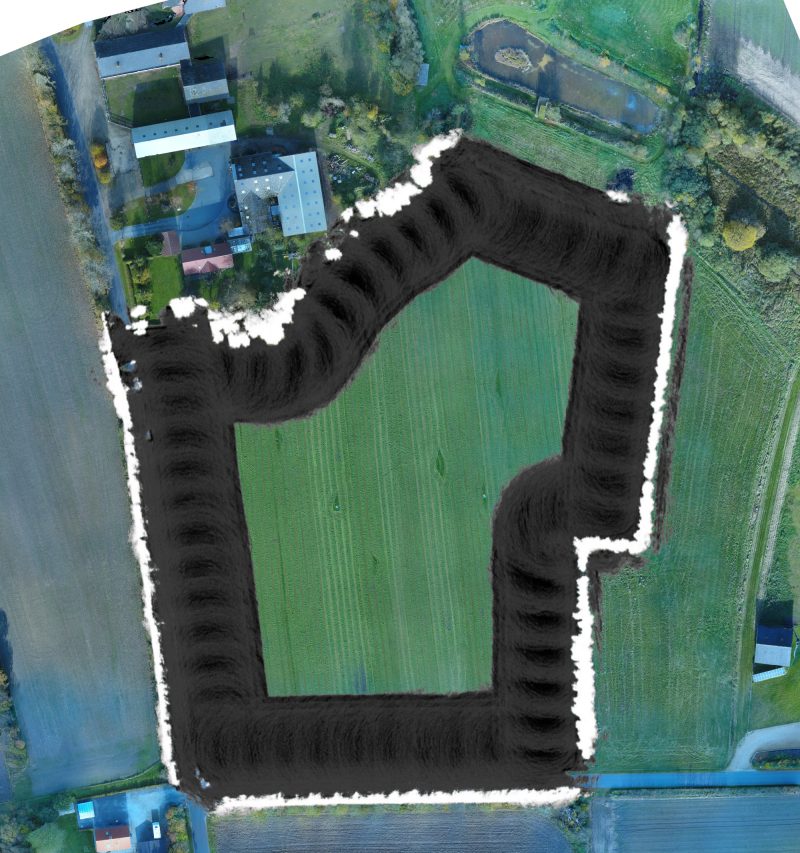

For an autonomous system to be safe, obstacle detection must be followed by obstacle avoidance. This includes, among other steps, a transformation of detections from local sensor frames to the vehicle frame, possibly followed by local or global mapping. For this, we investigated occupancy grid mapping combined with probabilistic fusion of inverse sensor models. That is, we transformed the detections from all sensors and accompanying algorithms to a 2D top-down view and fused these across both space and time. IMU and RTK GPS sensors were used for global localization. Below, we show a drone-recorded orthophoto of a grass field with and without an obstacle map overlaid. The obstacle map was generated based on a single traversal along the periferi of the field.