Robot safety

Safety first.

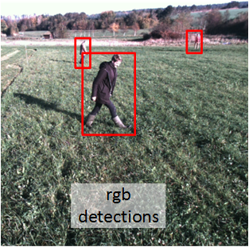

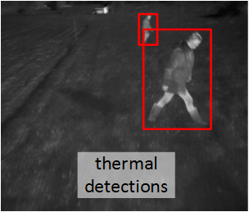

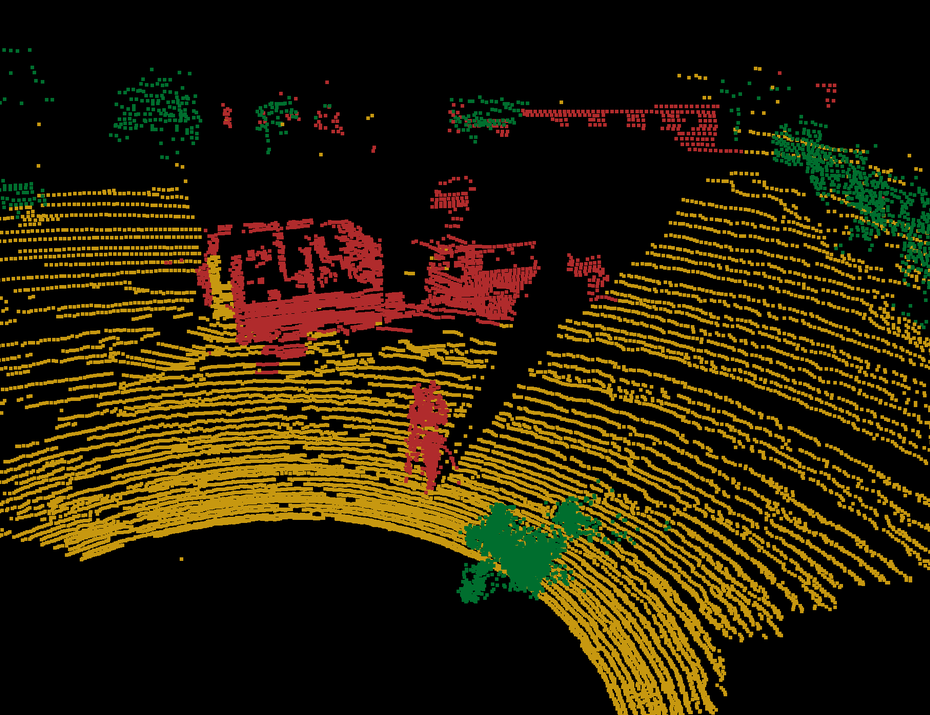

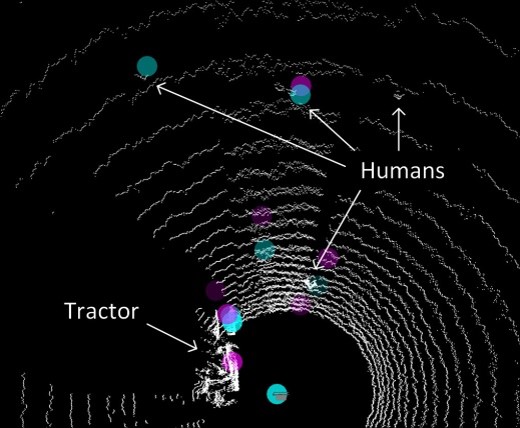

Automating agricultural processes with autonomous vehicles and robots has huge potential for reducing manual labor and optimizing yield. However, self-driving vehicles pose a major safety risk. The SAFE project therefore investigated technologies for maximizing the safety of both humans and animals, using multiple perception sensors and state-of-the-art object detection algorithms.

A perception platform was developed that combines a color camera, a thermal camera, a stereo camera, lidar, and radar. A variety of datasets were collected, including the popular, publicly available FieldSAFE dataset.

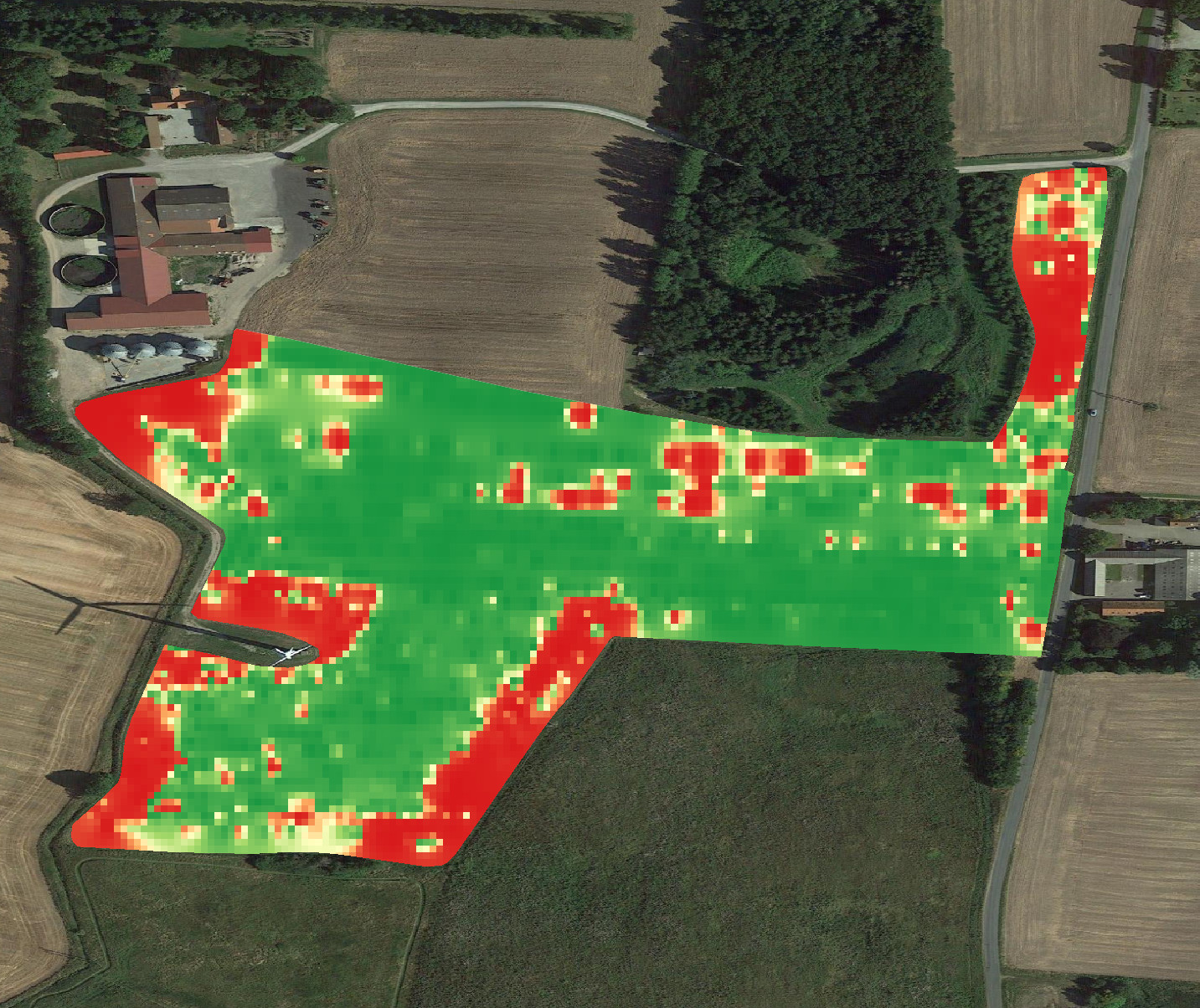

Weed mapping

With camera technology and the latest developments in artificial intelligence and big data, we can accurately map weeds in the field.

Weed maps make it possible to address one of the key elements of precision agriculture: acting only where necessary, which reduces the environmental impact and benefits the farmer’s economy.

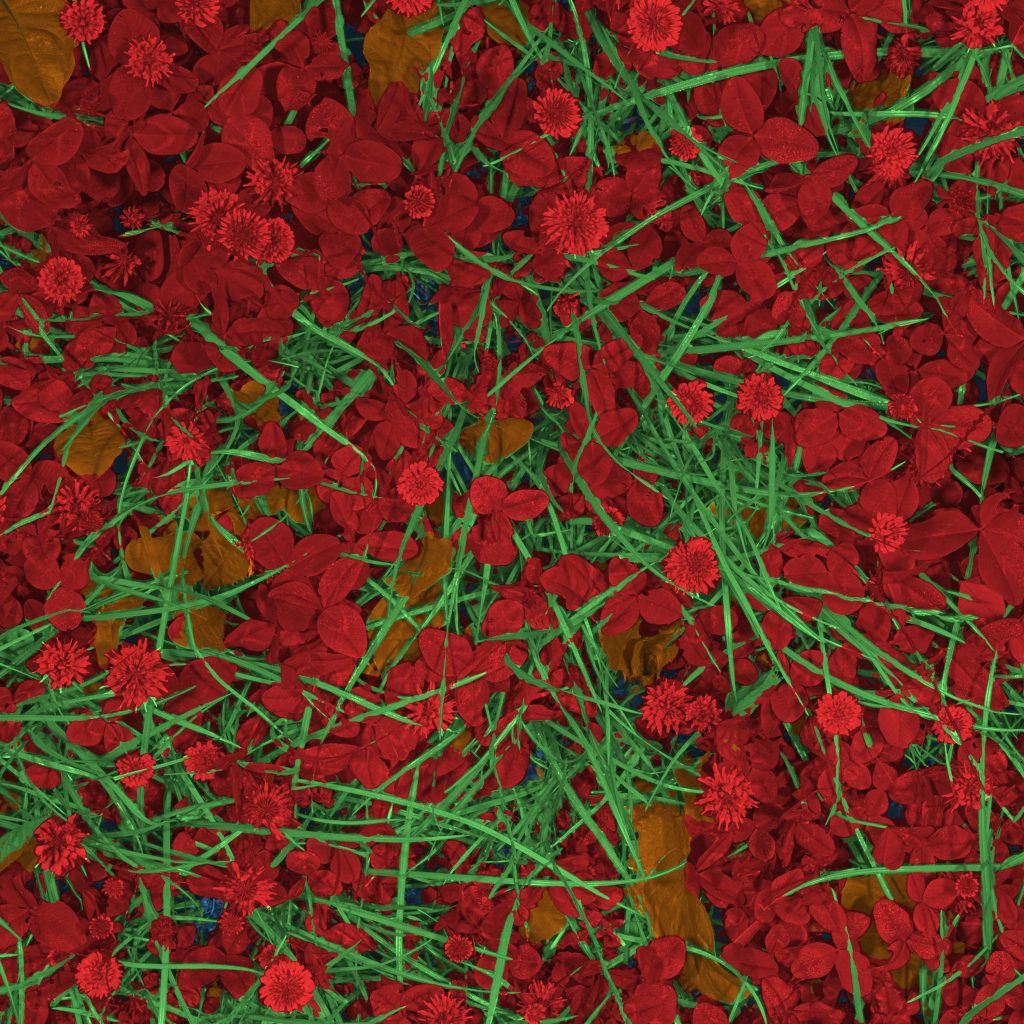

The automated mapping of weeds works for both grasses in high-value crops and mono- and dicotyledonous weeds in cereals.


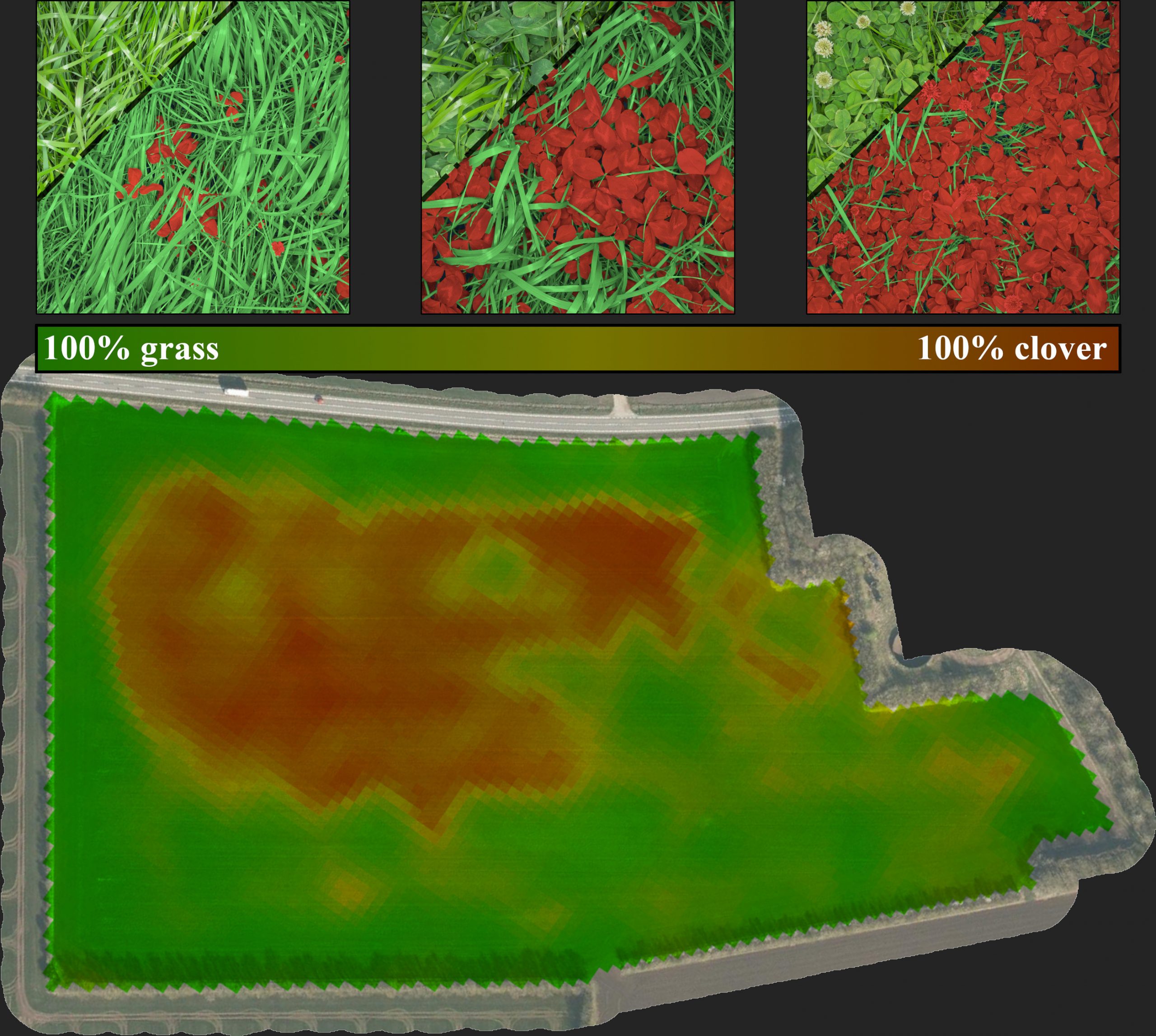

Mixed crop mapping

Precision agriculture in mixed crops often depends on the local competition between species in the field. With camera technology and artificial intelligence, the otherwise infeasible task of monitoring the local species distribution can be automated accurately and at scale.

The images demonstrate legume content prediction in annual forage mixtures of ryegrass and clovers. Here, the legume content is a valuable metric for predicting crop quality and for optimizing fertilization strategies.

Litter detection and mapping

The CamAlien camera system was developed for detecting invasive plant species, but it can also recognize roadside litter. When installed on vehicles, CamAlien identifies litter during transit and generates maps that highlight areas of concentrated litter, so that cleanup efforts can be targeted.
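A minimal sketch of how individual detections could be turned into a map of concentrated litter areas, assuming each detection comes with a GPS position. The function name, cell size, threshold, and coordinates are hypothetical and are not taken from CamAlien.

```python
import math
from collections import Counter

def litter_hotspots(detections, cell_size_deg=0.001, min_count=3):
    """Bin (lat, lon) detections into a regular grid and keep the busy cells.

    Note: a cell measured in degrees is not square in metres; a real system
    would more likely project to a metric coordinate system first.
    """
    counts = Counter()
    for lat, lon in detections:
        cell = (math.floor(lat / cell_size_deg), math.floor(lon / cell_size_deg))
        counts[cell] += 1
    # Only cells with enough detections are reported as hotspots.
    return {cell: n for cell, n in counts.items() if n >= min_count}

# Made-up detections: three close together, one isolated.
detections = [
    (56.17121, 10.20341),
    (56.17125, 10.20345),
    (56.17128, 10.20349),
    (56.18005, 10.21005),
]
hotspots = litter_hotspots(detections)
for cell, n in hotspots.items():
    print(f"grid cell {cell}: {n} detections")
```

Counting per grid cell keeps the map cheap to update while the vehicle is moving; the threshold decides how many detections a cell needs before it is flagged.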

CROPDRONE

Use drones to scan your crops! In the CROPDRONE project, we mount our high-resolution cameras on long-range drones to scan and map crops automatically.

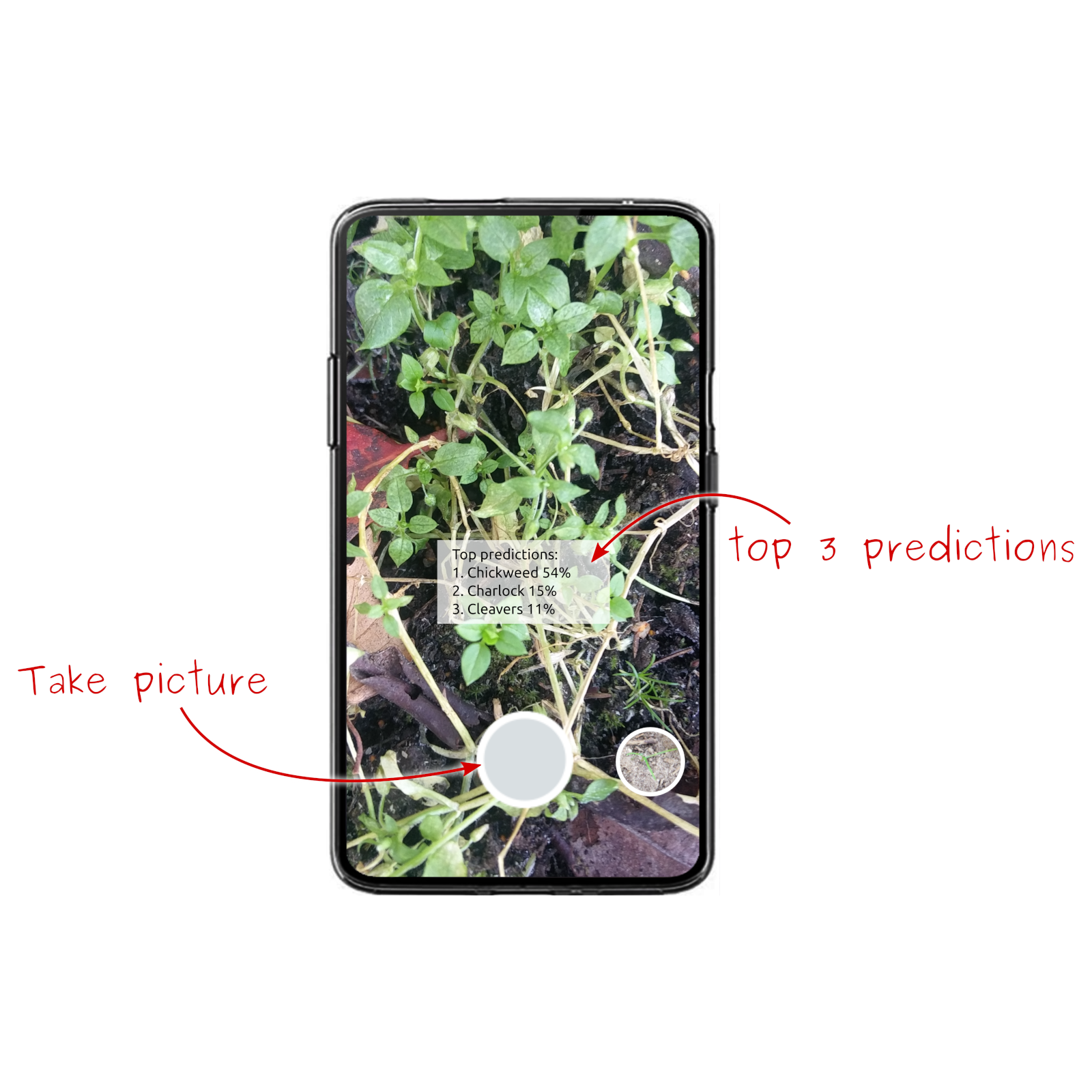

Weedr

Weedr is a mobile application for weed recognition. Weedr builds on the latest technology in artificial intelligence and acts as a modern weed identification key that instantly tells you which weeds have been photographed.

Simply point the phone at the plants you want recognized. In an instant, you get three suggestions, ranked by probability.
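Ranking suggestions by probability can be sketched as a softmax over classifier scores followed by a top-three selection. This is an illustration only: the function name, the use of softmax, and the example species and scores are assumptions, not details of how Weedr works.

```python
import math

def top_k_suggestions(scores, k=3):
    """Return the k most probable labels from raw classifier scores.

    `scores` maps a species label to an unnormalised score (logit).
    """
    # Softmax: subtract the maximum score for numerical stability.
    peak = max(scores.values())
    exp_scores = {label: math.exp(s - peak) for label, s in scores.items()}
    total = sum(exp_scores.values())
    probabilities = {label: e / total for label, e in exp_scores.items()}
    # Sort by descending probability and keep the first k entries.
    ranked = sorted(probabilities.items(), key=lambda item: item[1], reverse=True)
    return ranked[:k]

# Hypothetical scores for one photo; labels and values are made up.
example_scores = {
    "Chenopodium album": 2.1,
    "Stellaria media": 0.3,
    "Poa annua": 1.2,
    "Galium aparine": -0.5,
}
suggestions = top_k_suggestions(example_scores)
for label, probability in suggestions:
    print(f"{label}: {probability:.2f}")
```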

Weed Beeter

Efficient weed control with minimal impact requires knowing where the plants are. Weed Beeter is a system that detects weeds in sugar beets and supports the development of intelligent weed control applications.

Weed Beeter has several detection and classification systems that can be used depending on the desired weed control strategy. It can:

- Detect sugar beets and weeds

- Estimate the plant cover

- Estimate the plants’ locations

- Locate the stems of sugar beets

Plant locations and cover can be used to control weeds with methods that need to detect the weed canopy, such as precision sprayers. Locating the stems makes mechanical weeding under the crop leaves possible, for example to guide weeding robots.
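As an illustration of the cover and location estimates listed above, the sketch below works on a per-pixel class mask. It assumes that a segmentation step has already labeled each pixel as soil, sugar beet, or weed; the class ids, function names, and the tiny example mask are hypothetical and do not describe Weed Beeter's actual implementation.

```python
from collections import deque

SOIL, BEET, WEED = 0, 1, 2  # hypothetical class ids

def plant_cover(mask, class_id):
    """Fraction of pixels in `mask` that belong to `class_id`."""
    total = sum(len(row) for row in mask)
    hits = sum(row.count(class_id) for row in mask)
    return hits / total

def plant_locations(mask, class_id):
    """Centroid (row, col) of each 4-connected region of `class_id`."""
    height, width = len(mask), len(mask[0])
    seen = [[False] * width for _ in range(height)]
    centroids = []
    for r in range(height):
        for c in range(width):
            if mask[r][c] != class_id or seen[r][c]:
                continue
            # Breadth-first flood fill collects one connected region.
            queue = deque([(r, c)])
            seen[r][c] = True
            pixels = []
            while queue:
                y, x = queue.popleft()
                pixels.append((y, x))
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    inside = 0 <= ny < height and 0 <= nx < width
                    if inside and not seen[ny][nx] and mask[ny][nx] == class_id:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            mean_row = sum(p[0] for p in pixels) / len(pixels)
            mean_col = sum(p[1] for p in pixels) / len(pixels)
            centroids.append((mean_row, mean_col))
    return centroids

# Made-up 4 x 6 mask: one weed patch, one single-pixel weed, one beet.
mask = [
    [0, 2, 2, 0, 0, 0],
    [0, 2, 2, 0, 1, 1],
    [0, 0, 0, 0, 1, 1],
    [2, 0, 0, 0, 0, 0],
]
weed_cover = plant_cover(mask, WEED)
weed_locations = plant_locations(mask, WEED)
print(f"weed cover: {weed_cover:.1%}, weed locations: {weed_locations}")
```

Treating each connected region as one plant is a simplification; overlapping leaves would merge into a single region and need a more careful split.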

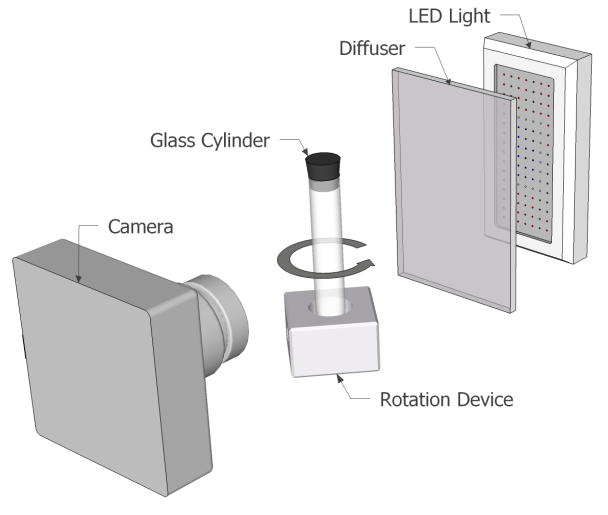

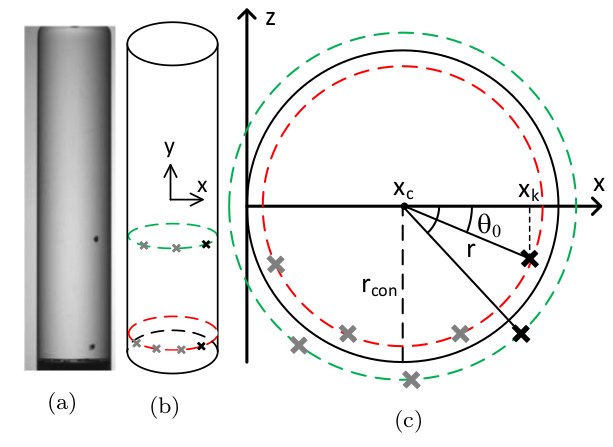

3D Vision AI for Automated Impurity Inspection

In pharmaceutical manufacturing, 2D inspection systems often struggle to distinguish between critical internal impurities and harmless external dirt. This lack of depth perception leads to high false-reject rates and significant product waste.

Partnering with a leading pharmaceutical inspection OEM, we developed a Vision AI solution that uses rotation physics to extract 3D positions from 2D cameras. This allows the system to locate particles precisely, so that only truly contaminated containers are rejected.
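To give an intuition for how rotation can reveal depth from 2D images, here is a simplified geometric sketch; it is not the method from the project or the paper. It assumes a side-on orthographic camera, a particle that rotates rigidly with the container, known rotation angles, and no refraction. Under these assumptions a particle at radius r and phase φ appears at horizontal offset x = r·sin(θ + φ), so r can be recovered with a least-squares sinusoid fit. All names and numbers are hypothetical.

```python
import math

def estimate_radius(angles_rad, offsets_mm):
    """Fit x = a*sin(theta) + b*cos(theta) and return (radius, phase)."""
    s_ss = s_cc = s_sc = s_xs = s_xc = 0.0
    for theta, x in zip(angles_rad, offsets_mm):
        s, c = math.sin(theta), math.cos(theta)
        s_ss += s * s
        s_cc += c * c
        s_sc += s * c
        s_xs += x * s
        s_xc += x * c
    # Solve the 2x2 normal equations of the linear least-squares problem.
    det = s_ss * s_cc - s_sc ** 2
    if abs(det) < 1e-12:
        raise ValueError("rotation angles do not constrain the fit")
    a = (s_xs * s_cc - s_xc * s_sc) / det  # a = r * cos(phase)
    b = (s_xc * s_ss - s_xs * s_sc) / det  # b = r * sin(phase)
    return math.hypot(a, b), math.atan2(b, a)

def classify_particle(radius_mm, outer_radius_mm, tolerance_mm=0.2):
    """Label a particle by its distance from the rotation axis (simplified)."""
    if radius_mm >= outer_radius_mm - tolerance_mm:
        return "external"
    return "internal"

# Synthetic observations: 12 views, 30 degrees apart.
angles = [math.radians(step * 30) for step in range(12)]
outer_radius = 12.0  # mm, hypothetical container

inside_track = [5.0 * math.sin(t + 0.7) for t in angles]    # particle inside
surface_track = [12.0 * math.sin(t + 2.0) for t in angles]  # dirt on outside

inside_radius, _ = estimate_radius(angles, inside_track)
surface_radius, _ = estimate_radius(angles, surface_track)
print(classify_particle(inside_radius, outer_radius))
print(classify_particle(surface_radius, outer_radius))
```

The key idea is that a particle near the axis sweeps a small horizontal range during rotation, while dirt on the outer surface sweeps the full container width, which a single 2D image cannot tell apart.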

By moving from 2D detection to 3D positioning, we revolutionized the quality assurance process for transparent containers:

- 85% System Accuracy: Successfully differentiates internal contaminants from external surface dirt.

- High-Speed Processing: Real-time analysis completed in just 1 second per container.

- Reduced Waste: Significantly lowers false rejection rates, improving the manufacturing bottom line.

Based on the project, we published a peer-reviewed paper: “3D impurity inspection of cylindrical transparent containers” in Measurement Science and Technology.

Cases | Mikkel Fly Kragh | 2026-02-20T10:50:22+00:00